Best For

GPT Image 2

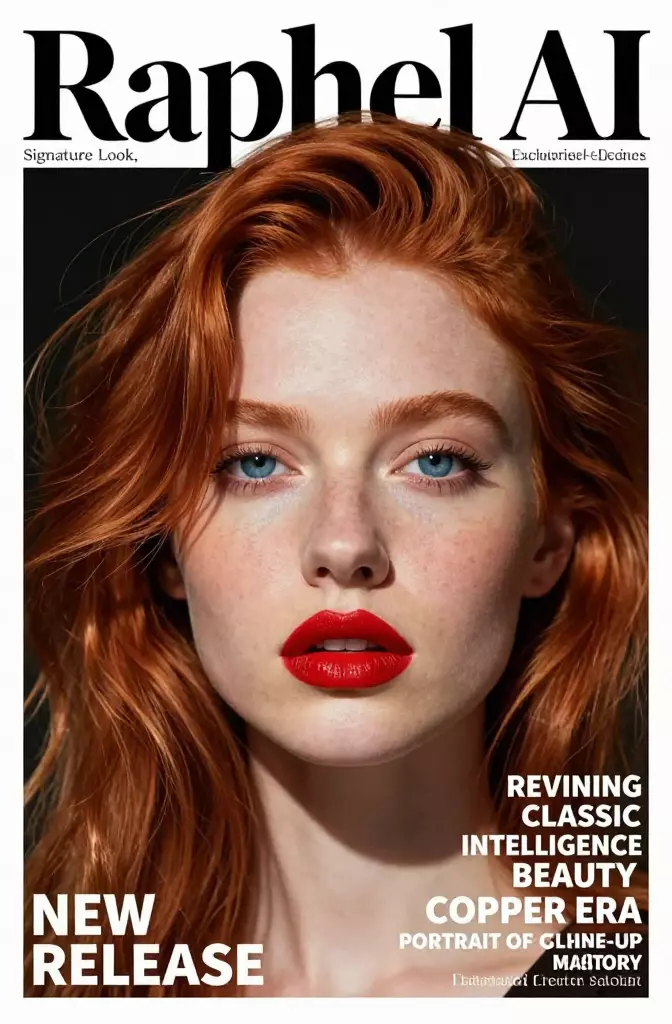

The best choice when you want a current OpenAI-native image workflow that aims at editorial-quality output, supports practical editing, and keeps model selection simple.

Marketing creatives, typography-heavy assets, editorial layouts, and teams already standardized on OpenAI tooling.

Strengths

- OpenAI currently positions it as the flagship image model for fast, high-quality generation and editing.

- OpenAI's launch examples suggest a real emphasis on posters, brochures, notebook visuals, and other layout-sensitive assets.

- A cleaner one-model starting point for teams that value simplicity as much as raw capability.

Tradeoffs

- OpenAI's messaging around deep multi-reference composition is less explicit than the vendor messaging for FLUX.2.

- You still need strong prompt structure if the brief involves typography, strict brand rules, or careful hierarchy.
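One way to read "strong prompt structure" is to keep the parts of a brief separate until the last moment, so the exact text, brand rules, and hierarchy are each stated explicitly. The sketch below is a minimal illustration of that idea in plain Python. The `ImageBrief` class, its field names, and the section labels are all hypothetical and not part of any OpenAI API. It only builds a prompt string and makes no model call.

```python
from dataclasses import dataclass, field


@dataclass
class ImageBrief:
    """Hypothetical container for a layout-sensitive image brief."""
    subject: str
    headline: str = ""                                   # exact text to render
    brand_rules: list[str] = field(default_factory=list)
    hierarchy: list[str] = field(default_factory=list)   # most prominent first

    def to_prompt(self) -> str:
        """Join the non-empty parts of the brief into labeled sections."""
        sections = [f"Subject: {self.subject}"]
        if self.headline:
            sections.append(f'Exact headline text: "{self.headline}"')
        if self.brand_rules:
            rules = "\n".join(f"- {rule}" for rule in self.brand_rules)
            sections.append("Brand rules:\n" + rules)
        if self.hierarchy:
            ranked = "\n".join(
                f"{i}. {item}" for i, item in enumerate(self.hierarchy, 1)
            )
            sections.append("Visual hierarchy (most to least prominent):\n" + ranked)
        return "\n\n".join(sections)


# Illustrative values only; a real brief would come from your own brand guide.
brief = ImageBrief(
    subject="A3 poster for a spring product launch",
    headline="Spring Launch",
    brand_rules=["Use only navy and white", "Logo in the top-left corner"],
    hierarchy=["Headline", "Product photo", "Date and venue"],
)
prompt = brief.to_prompt()
print(prompt)
```

The resulting string can be passed to whichever image model you use. Keeping the sections labeled makes it easier to see which part of the brief a given output ignored.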