Best For

GPT Image 2

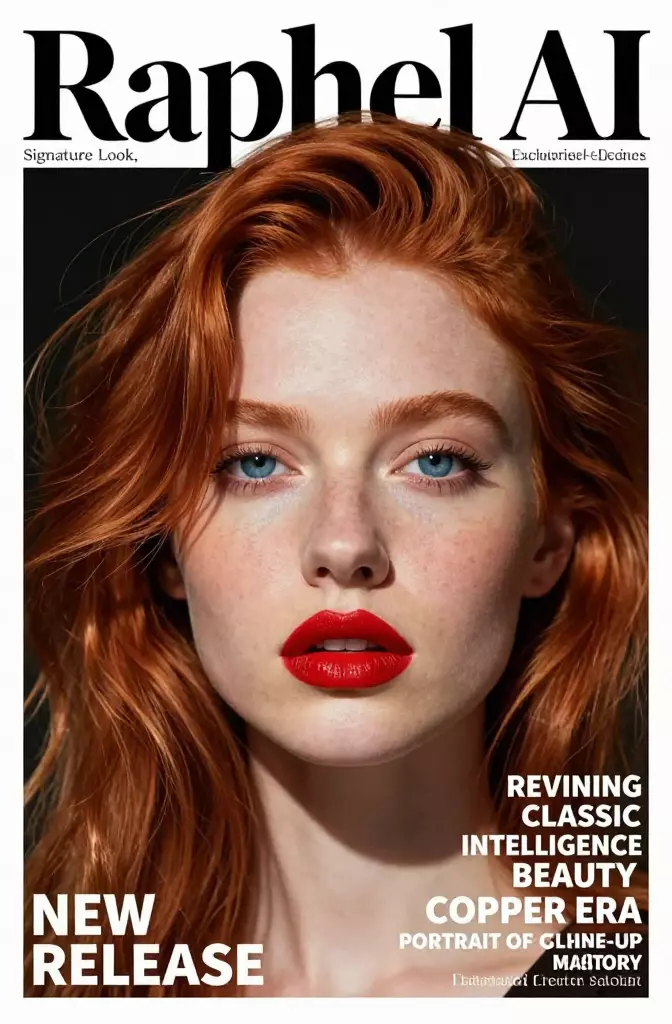

OpenAI positions GPT Image 2 as its current high-end image model for fast generation and editing. It is the cleanest starting point when you want one OpenAI-native model for both creation and revision.

Editorial layouts, typed assets, brand visuals, and image editing inside an OpenAI-heavy stack.

Strengths

- OpenAI's current model page frames it as a state-of-the-art image model for fast, high-quality generation and editing.

- OpenAI's launch examples lean into notebook pages, multilingual posters, brochures, manga pages, and stylized art, all of which reward precise instruction following.

- A strong fit when usability, hierarchy, and readable composition matter as much as visual polish.

Tradeoffs

- Teams with highly specific multi-reference needs may still prefer a specialist model family with more explicit reference-control features.

- You still need disciplined prompting for typography, layout, and brand constraints. The model is not a substitute for a clear brief.
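One way to keep prompting disciplined is to write the brief as structured data and render it into a prompt string, so that typography, layout, and brand constraints are never left out by accident. The sketch below is only an illustration under that assumption: `ImageBrief`, its field names, and the sample values are all hypothetical, and nothing here calls an OpenAI API. The resulting string would be passed to whichever image endpoint you actually use.

```python
from dataclasses import dataclass, field


@dataclass
class ImageBrief:
    """Hypothetical container for the parts of a brief; not an OpenAI type."""

    subject: str
    layout: str
    typography: str
    exact_text: list[str] = field(default_factory=list)
    brand_constraints: list[str] = field(default_factory=list)

    def to_prompt(self) -> str:
        """Render the brief as a labeled, sectioned prompt string."""
        lines = [
            f"Subject: {self.subject}",
            f"Layout: {self.layout}",
            f"Typography: {self.typography}",
        ]
        # Quote any copy that must appear verbatim in the image.
        if self.exact_text:
            lines.append("Text to render exactly as written:")
            lines.extend(f'- "{t}"' for t in self.exact_text)
        # List brand rules separately so they are easy to audit.
        if self.brand_constraints:
            lines.append("Brand constraints:")
            lines.extend(f"- {c}" for c in self.brand_constraints)
        return "\n".join(lines)


# Sample values are invented for illustration only.
brief = ImageBrief(
    subject="Launch poster for a fictional coffee brand",
    layout="Portrait, headline in top third, product photo centered",
    typography="Bold sans-serif headline, small serif tagline",
    exact_text=["Slow Mornings", "Roasted in small batches"],
    brand_constraints=[
        "Palette limited to cream and dark brown",
        "No logos other than ours",
    ],
)
prompt = brief.to_prompt()
print(prompt)
```

Keeping the brief in one structure also makes it easy to review or version alongside the generated assets, which may matter more than the exact wording of any single prompt.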