
HappyHorse: The Codename Behind Wan 2.7

HappyHorse is the codename the creator community gave to the mystery video model that leaked onto benchmarks in late 2025. It is now widely believed to be the beta of Alibaba's Wan 2.7. Here is the full story, a capability breakdown, and where to try HappyHorse today.


If you have followed AI video leaderboards over the last few months, you have probably seen a mystery model called HappyHorse appear at the top of quality comparisons and then quietly disappear. Much of the creator community is now convinced that HappyHorse was the beta codename for Alibaba's Wan 2.7, the production model that shipped inside the Tongyi Wanxiang ecosystem. This page covers where HappyHorse came from, what it can do, how it compares with five other major AI video models, and where you can try HappyHorse-tier generation today through the Wan 2.7 aggregator.


What is HappyHorse?

HappyHorse is the unofficial community name for a video generation model that first appeared on public benchmark leaderboards in late 2025 with no attribution and immediately started beating every other AI video generator in head-to-head comparisons. It had no website, no paper, and no company listed — only a codename.

Within weeks, creators connected the dots: the generation fingerprint, the motion quality, the 1080P multi-shot handling, and the timing all lined up with what Alibaba's Tongyi Wanxiang team was quietly preparing to ship. When Wan 2.7 launched publicly, the community consensus was immediate — HappyHorse was the beta version of Wan 2.7, now available as the production release.

- First spotted anonymously on AI video benchmark leaderboards in late 2025

- Consistently ranked #1 for overall video quality in blind community tests

- Capabilities, visual fingerprint, and release timing closely match Wan 2.7

- Alibaba has not officially confirmed the HappyHorse codename, but the community treats them as the same model

The HappyHorse timeline: from anonymous leaderboard leader to Wan 2.7 production release

The HappyHorse story is one of the most-watched model launches in AI video this year. Here is how it unfolded from the community's perspective.

Stage 1 — The anonymous benchmark entries

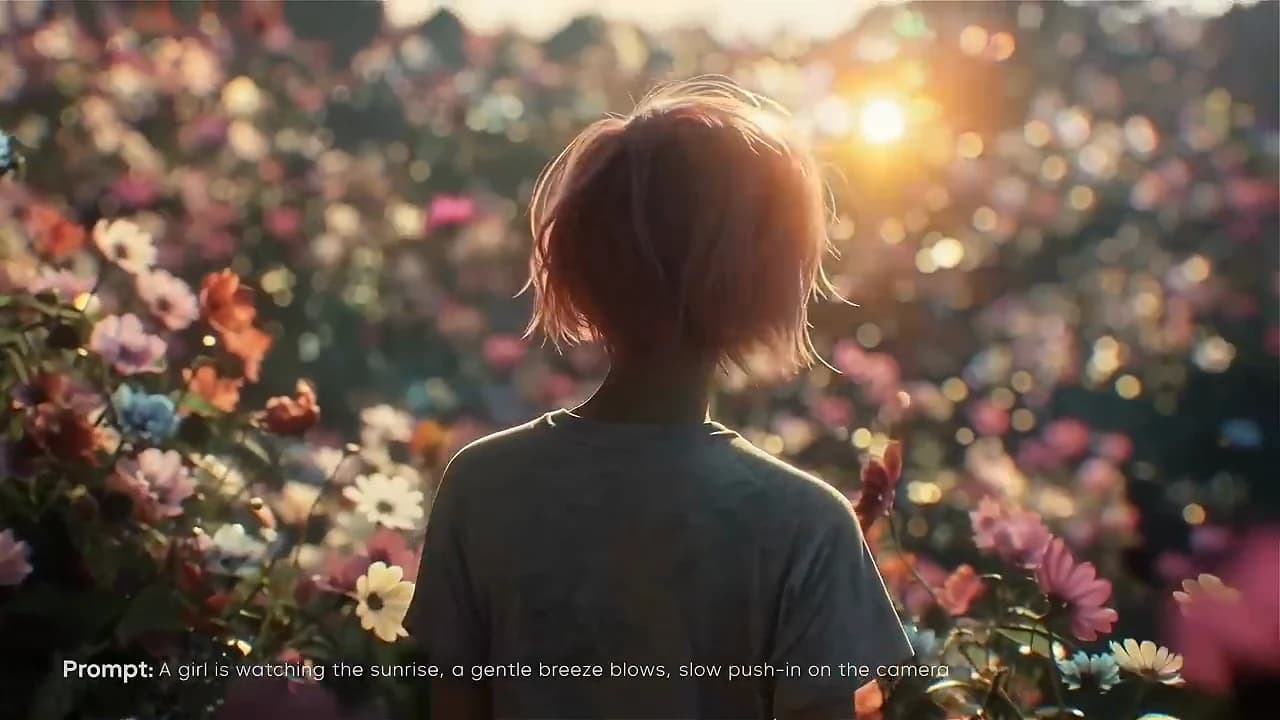

HappyHorse first appeared on public AI video comparison boards as an unlabeled entry. Early testers noticed the outputs had unusually strong cinematic framing, reliable character consistency across multi-shot sequences, and native audio that felt more integrated than any other model in the lineup.

Stage 2 — The community connects the dots

Creators began posting side-by-side comparisons between HappyHorse outputs and early Wan 2.7 previews from Alibaba's Tongyi Wanxiang team. The visual fingerprint — the way HappyHorse handled motion blur, skin texture, and multi-shot continuity — matched Wan 2.7 almost exactly.

Stage 3 — Wan 2.7 ships, HappyHorse goes silent

When Wan 2.7 launched as a production model, the anonymous HappyHorse entries stopped appearing on new benchmarks. The community read this as confirmation: HappyHorse had been the beta, and Wan 2.7 was the official release version of the same underlying model.

What HappyHorse / Wan 2.7 can actually do

If you were paying attention to the HappyHorse leaks, you already know the strengths. For everyone else, here is the capability summary. Everything below describes Wan 2.7, the production release of what the community was calling HappyHorse, as it runs inside the aggregator.

Cinematic multi-shot narrative

HappyHorse's most commented-on strength was its ability to hold a coherent story across multiple shots in a single generation. Wan 2.7 ships this capability natively — you can describe a three-beat scene in one prompt and get back a cut that actually feels edited rather than morphed.

Four production lines: text, image, reference, and video editing

The HappyHorse leaks only hinted at multi-modal capability. The full Wan 2.7 release confirmed it: text-to-video, image-to-video, reference-to-video with character consistency, and in-place video editing, all sharing the same underlying model.

1080P output with native audio

HappyHorse benchmark clips ran at cinematic resolution with synced audio baked in. Wan 2.7 delivers the same: 720P and 1080P tiers, clips up to 15 seconds, and native audio generation without a separate text-to-speech step.
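The limits above (two resolution tiers, clips up to 15 seconds, four input modes) can be sketched as a small pre-flight check. This is only an illustrative sketch: `GenerationRequest`, `validate`, the field names, and the 5-second default are hypothetical and are not the aggregator's or Alibaba's actual API; only the tiers, the 15-second cap, and the four modes come from this page.

```python
from dataclasses import dataclass
from typing import List, Optional

# Limits taken from this page; every name below is hypothetical.
MODES = {"text-to-video", "image-to-video", "reference-to-video", "video-editing"}
RESOLUTIONS = {"720P", "1080P"}
MAX_DURATION_SECONDS = 15


@dataclass
class GenerationRequest:
    mode: str
    prompt: str
    resolution: str = "1080P"
    duration_seconds: int = 5          # assumed default, not from the source
    source_path: Optional[str] = None  # image, reference, or video input


def validate(request: GenerationRequest) -> List[str]:
    """Return a list of problems; an empty list means the request looks valid."""
    problems = []
    if request.mode not in MODES:
        problems.append(f"unknown mode: {request.mode}")
    if request.resolution not in RESOLUTIONS:
        problems.append(f"unsupported resolution: {request.resolution}")
    if not 0 < request.duration_seconds <= MAX_DURATION_SECONDS:
        problems.append(f"duration must be 1-{MAX_DURATION_SECONDS} seconds")
    # Assumption: every mode except text-to-video needs an input file.
    if request.mode != "text-to-video" and request.source_path is None:
        problems.append(f"{request.mode} needs a source file")
    if not request.prompt.strip():
        problems.append("prompt is empty")
    return problems
```

Checking a request locally like this, before spending credits, is one way to catch an out-of-range duration or a missing reference image early.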

Strong Chinese + English prompt understanding

HappyHorse had an unusual advantage on Chinese-language prompts, which was a clue about its origin. Wan 2.7 inherits this — both English and Chinese prompts produce equally strong shot interpretation.

HappyHorse vs other AI video models

Here is how HappyHorse (Wan 2.7) stacks up against the major competitors that were on the leaderboard around the same time. Each entry below links to a full head-to-head comparison page.

- HappyHorse vs Sora 2 — Sora 2 is no longer available; HappyHorse matches or exceeds it on every axis the community measured

- HappyHorse vs Google Veo 3 — Veo 3 is strong on physics realism; HappyHorse wins on multi-shot narrative and cost

- HappyHorse vs Runway Gen-4 — Runway remains the legacy creator favorite; HappyHorse is sharper on prompt fidelity and cheaper per clip

- HappyHorse vs Kling 3 — Kling 3 leads on audio sync; HappyHorse leads on 15-second multi-shot takes

- HappyHorse vs Luma Dream Machine — Luma is fast for drafts; HappyHorse is the production-quality finisher

How to try HappyHorse today

Because HappyHorse is widely believed to have become Wan 2.7, the way to try HappyHorse is to use Wan 2.7 directly. The fastest route is this aggregator, which runs Wan 2.7 as the primary backend alongside Sora 2 Pro, Kling 3, and Seedance in the same workspace. New users get starter credits, so you can see HappyHorse-tier output on your own prompts before committing.

- Sign in to the aggregator

- Pick Wan 2.7 as the backend (this is what HappyHorse became)

- Paste your prompt, reference image, or both

- Generate — the output is the HappyHorse quality people were benchmarking

FAQ

Is HappyHorse the same model as Wan 2.7?

The creator community treats them as the same. HappyHorse was the codename on anonymous benchmark leaderboards, and Wan 2.7 is the production release from Alibaba's Tongyi Wanxiang team. Capabilities, visual fingerprint, and release timing all line up. Alibaba has not officially confirmed the codename, but the correspondence is strong enough that most creators use the names interchangeably.

Where can I use HappyHorse right now?

HappyHorse as a standalone beta is no longer running. To use the same underlying model, use Wan 2.7 inside this aggregator workspace. It runs the production release of what the community was calling HappyHorse, alongside Sora 2 Pro, Kling 3, and Seedance as alternate backends.

Is HappyHorse free to try?

The aggregator gives new users free starter credits, which are enough to generate several HappyHorse-quality clips (Wan 2.7 backend) before deciding whether to buy more. There is no subscription lock-in, and credits are shared across every model in the workspace.

Who built HappyHorse?

HappyHorse is widely believed to be a project of Alibaba's Tongyi Wanxiang team, although Alibaba has not confirmed this. The HappyHorse codename appeared on anonymous leaderboards first, and Wan 2.7, the production release from Tongyi Wanxiang, closely matches the HappyHorse benchmark results.

Why was it called HappyHorse?

The name was never officially claimed. It appeared as a benchmark entry handle and the community adopted it. The most common theory is that it was an internal codename from the Tongyi Wanxiang team that leaked with early evaluation runs.

How does HappyHorse compare to Sora 2?

HappyHorse (now Wan 2.7) matches Sora 2's core video quality and exceeds it on multi-shot narrative, multi-modal input support, and workflow flexibility. Sora 2 has shut down, so for most creators HappyHorse / Wan 2.7 is now the stronger long-term option. See the full sora-2-vs-happyhorse page for a side-by-side breakdown.